Part 1: Computer Vision and Deep Learning

- Finding lane lines

- Workflow for lane detection pipeline:

- Load video -> Greyscale transform -> Gaussian smoothing -> Canny edge detection -> Select the region of interest -> Hough transform -> Draw lines on the original video

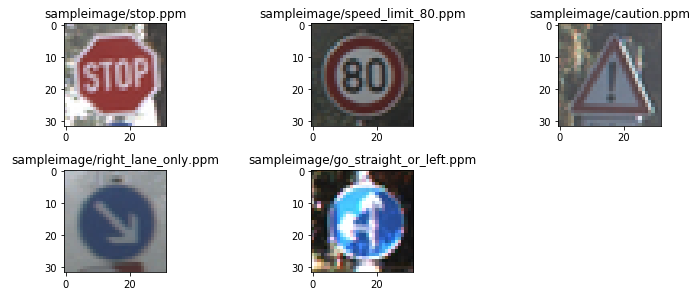
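To make the Hough transform step of the pipeline above more concrete, here is a minimal pure-Python sketch of the voting idea. It is an illustration only, not the project's implementation, which would more likely rely on a library routine such as OpenCV's `cv2.HoughLinesP`. The function name `hough_peak`, its parameters, and the sample edge points are all hypothetical.

```python
import math

def hough_peak(points, n_theta=180, rho_step=1.0):
    """Return (rho, theta_degrees, votes) for the strongest line through `points`.

    Each point (x, y) votes for every line rho = x*cos(theta) + y*sin(theta)
    that passes through it; collinear points pile up in one accumulator cell.
    """
    votes = {}
    for x, y in points:
        for t in range(n_theta):
            theta = math.pi * t / n_theta
            rho = x * math.cos(theta) + y * math.sin(theta)
            cell = (round(rho / rho_step), t)  # quantize rho into bins
            votes[cell] = votes.get(cell, 0) + 1
    # Pick the accumulator cell with the most votes.
    (rho_idx, t), count = max(votes.items(), key=lambda kv: kv[1])
    return rho_idx * rho_step, 180.0 * t / n_theta, count

# Hypothetical edge pixels: ten points on the vertical line x = 5, plus two outliers.
edge_points = [(5, y) for y in range(10)] + [(0, 3), (9, 7)]
rho, theta_deg, count = hough_peak(edge_points)
print(rho, theta_deg, count)
```

The ten collinear points all vote for the same cell, so the peak identifies the line while the two outliers are ignored. In a real pipeline the input points would come from the Canny edge image after region-of-interest masking.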

- Traffic sign classifier

- Datasets: German Traffic Sign Recognition Benchmark

- Three-layer neural network, including a fully connected layer

- Test accuracy: 0.935

- Advanced lane finding

- Workflow:

- Compute the camera calibration matrix and distortion coefficients given a set of chessboard images.

- Apply a distortion correction to raw images.

- Use color transforms, gradients, etc., to create a thresholded binary image.

- Apply a perspective transform to rectify the binary image ("bird's-eye view").

- Detect lane pixels and fit to find the lane boundary.

- Determine the curvature of the lane and the vehicle position with respect to the lane center.

- Warp the detected lane boundaries back onto the original image.

- Output visual display of the lane boundaries and numerical estimation of lane curvature and vehicle position.
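The curvature and vehicle-position step in the workflow above can be sketched as follows. This assumes each lane boundary has been fitted with a second-order polynomial x = a*y^2 + b*y + c in real-world units, and that the camera is mounted at the horizontal center of the image. Both are assumptions for illustration, as are the function names and the sample numbers.

```python
def curvature_radius(a, b, y):
    """Radius of curvature of the curve x = a*y**2 + b*y + c, evaluated at y.

    Uses R = (1 + (dx/dy)**2)**1.5 / |d2x/dy2| with dx/dy = 2*a*y + b
    and d2x/dy2 = 2*a. The constant term c does not affect curvature.
    """
    return (1.0 + (2.0 * a * y + b) ** 2) ** 1.5 / abs(2.0 * a)

def vehicle_offset(left_x, right_x, image_width, meters_per_pixel):
    """Signed offset of the image center from the lane center, in meters.

    left_x and right_x are the pixel positions of the lane boundaries at the
    bottom of the image. The result is negative when the image center lies to
    the left of the lane center (assuming the camera sits at the image center).
    """
    lane_center = (left_x + right_x) / 2.0
    return (image_width / 2.0 - lane_center) * meters_per_pixel

# Hypothetical values: a parabola with a = 0.5, b = 0, evaluated at y = 0.
radius = curvature_radius(0.5, 0.0, 0.0)

# Hypothetical lane positions in a 1280-pixel-wide image, with an assumed
# scale of 3.7 m of lane width per 700 pixels.
offset = vehicle_offset(300, 1000, 1280, 3.7 / 700)
print(radius, offset)
```

Evaluating the radius at the bottom of the image (the y value closest to the vehicle) is a common choice, since that is where the estimate is most relevant for steering.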

- Vehicle detection and tracking

Part 2: Sensor Fusion, Localization, and Control

- Extended Kalman filter

- Unscented Kalman filter

- Kidnapped vehicle

- PID controller

- Model predictive control

Part 3: Path Planning, Concentrations, and System Integration

- Path planning

- Semantic segmentation

- Functional safety

- System integration